Multi-Perspectives

Overview

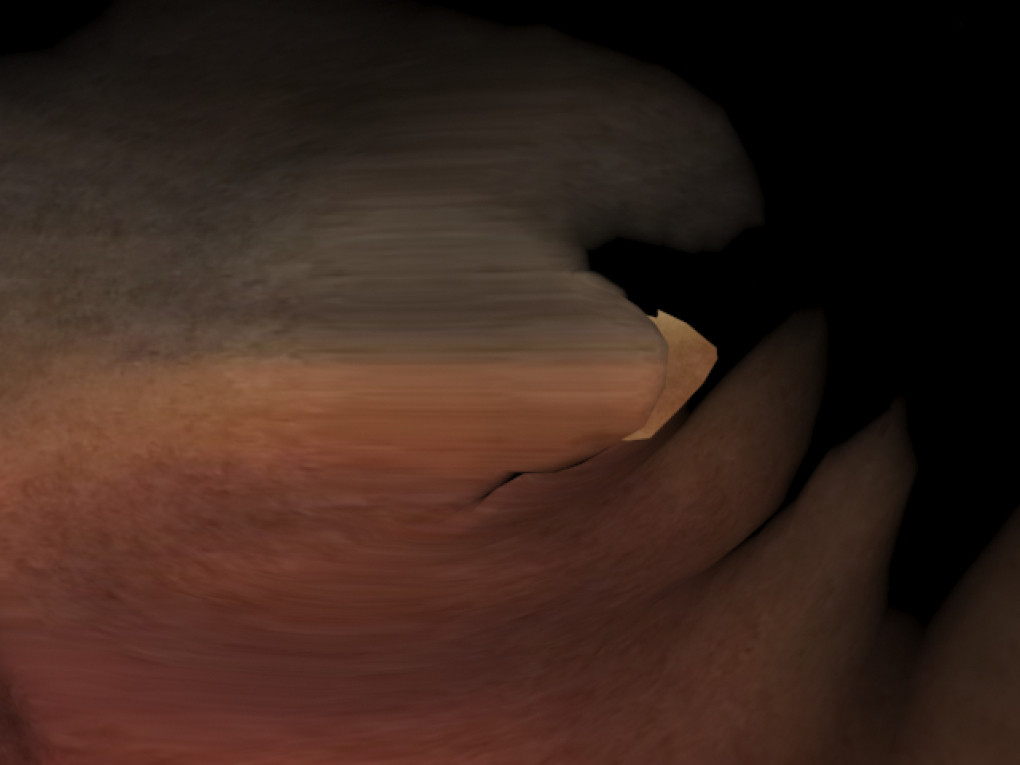

Multi‑Perspectives is a cross‑disciplinary collaboration between an abstract landscape painter and a computer scientist specialising in skull anatomy and 3D geometric modelling. The project will map painted textures onto realistic skull geometries, render virtual views of the textured models, and translate selected virtual snapshots back into new physical paintings. This iterative feedback process will investigate how digital shape, texture and lighting can extend artistic composition, and how the resulting dialogue between virtual and material work reshapes audience perception of creative labour.

Aims

- Explore how procedural mapping of an original painting onto complex 3D anatomy generates new visual grammars.

- Develop a studio workflow where virtual manipulations inform new physical artworks.

- Test how audiences respond to material ↔ virtual dialogues and whether exposure to process deepens appreciation.

Method & Tasks

Source & prepare assets

- Create initial paintings by the artist.

- Prepare high‑resolution skull models: synthetic 3‑million‑polygon models at first, with a later extension to realistic shapes extracted from magnetic resonance (MR) volumes via the collaborator’s medical‑data pipeline.

Texture mapping & virtual exploration

- Map painting textures to skull surfaces using 3D packages (3ds Max, MeshLab) and in‑house OpenGL tools.

- Generate manipulated mappings (segmentation, exaggerated strokes, remapped colour fields) and render snapshots from multiple viewpoints and lighting conditions.
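
The two steps above can be illustrated with a minimal sketch. It is not the project's pipeline, which relies on 3ds Max, MeshLab and in‑house OpenGL tools; it assumes a simple spherical projection to assign texture coordinates and a ring of camera positions for the snapshot viewpoints. The function names (`spherical_uv`, `sample_painting`, `orbit_viewpoints`) and the painting format (rows of RGB tuples) are hypothetical, chosen only for illustration.

```python
import math

def spherical_uv(vertex, centre=(0.0, 0.0, 0.0)):
    """Map a 3D vertex to (u, v) in [0, 1] by projecting it onto a sphere around `centre`."""
    x, y, z = (vertex[i] - centre[i] for i in range(3))
    r = math.sqrt(x * x + y * y + z * z)
    if r == 0.0:
        return 0.5, 0.5  # degenerate vertex at the centre: use the texture midpoint
    sin_lat = max(-1.0, min(1.0, y / r))            # clamp against rounding error
    u = 0.5 + math.atan2(z, x) / (2.0 * math.pi)    # longitude -> horizontal texture axis
    v = 0.5 - math.asin(sin_lat) / math.pi          # latitude -> vertical texture axis
    return u, v

def sample_painting(painting, u, v):
    """Nearest-neighbour colour lookup in a painting stored as rows of RGB tuples."""
    height, width = len(painting), len(painting[0])
    col = min(int(u * width), width - 1)
    row = min(int(v * height), height - 1)
    return painting[row][col]

def orbit_viewpoints(radius, n_azimuth, elevations_deg):
    """Camera positions on rings around the origin (assumed to be the model centre)."""
    views = []
    for elev in elevations_deg:
        e = math.radians(elev)
        for k in range(n_azimuth):
            a = 2.0 * math.pi * k / n_azimuth
            views.append((radius * math.cos(e) * math.cos(a),
                          radius * math.sin(e),
                          radius * math.cos(e) * math.sin(a)))
    return views
```

A spherical projection distorts texture near the poles and cannot handle concave regions such as eye sockets cleanly, so a real skull mesh would more likely use an unwrapped UV layout produced in the modelling package. The sketch only shows the principle: each vertex receives a texture coordinate, the painting is sampled at that coordinate, and the textured model is then viewed from a set of positions around it.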

Iterative painting practice

- Select virtual snapshots from which the artist will produce new paintings that reflect the emergent 3D compositions.

- Re‑digitise these new paintings to feed into subsequent mapping rounds (closing the feedback loop).

Interaction & evaluation

- Build a lightweight interactive display (a projection or mixed‑reality demo) that lets audiences toggle views and compare stages, and that captures their reactions.

- Collect qualitative (interviews, studio diaries) and quantitative (interaction logs, survey ratings) data.
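
As a rough illustration of how the quantitative data might be summarised, the sketch below assumes a before/after survey design and a simple event log. Both are assumptions rather than the project's confirmed protocol, and the field names (`before`, `after`, `action`, `stage`) and function names are hypothetical.

```python
from collections import Counter
from statistics import mean

def rating_shift(responses):
    """Mean per-participant change in rating (after minus before)."""
    return mean(r["after"] - r["before"] for r in responses)

def stage_view_counts(log_events):
    """Count how often each stage was opened via a 'toggle_view' event."""
    return Counter(e["stage"] for e in log_events if e["action"] == "toggle_view")

# Hypothetical example data: three survey responses and four logged events.
responses = [
    {"before": 3, "after": 4},
    {"before": 2, "after": 4},
    {"before": 5, "after": 5},
]
log_events = [
    {"action": "toggle_view", "stage": "original painting"},
    {"action": "toggle_view", "stage": "textured skull"},
    {"action": "rotate", "stage": "textured skull"},
    {"action": "toggle_view", "stage": "textured skull"},
]

shift = rating_shift(responses)
counts = stage_view_counts(log_events)
```

A positive mean shift would be consistent with increased appreciation after exposure to the process, although a sample this small could not support a statistical claim. The stage counts indicate which steps of the workflow visitors returned to most often.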

Stages & Timeline (9 months)

- Months 1–2: Asset creation and pipeline set‑up (paintings, model prep, software).

- Months 3–4: Texture mapping experiments and render library.

- Months 5–6: Iterative painting rounds and refinements.

- Month 7: Fabrication of exhibited works and interactive build.

- Months 8–9: Public testing (gallery/studio sessions & online deployment), evaluation, final documentation.

Benefits to Artists & Collaboration

- Artists will gain hands‑on skills in photoreal mapping, spatial compositing and iterative digital workflows—enabling new compositional strategies.

- The scientist will test anatomical modelling techniques in an aesthetic context, opening translational research routes (visualisation methods, educational tools).

- The partnership will model a sustainable practice for hybrid studio‑lab collaborations, fostering local networks and future projects.

Audience Impact & Perception

- By revealing process—mapping, manipulation and re-materialisation—audiences will see artistic decision‑making as layered and contingent, increasing empathy for labour and intention.

- Interactive encounters will allow viewers to probe how shape and texture affect emotion and meaning; evaluation will measure shifts in appreciation and interpretive depth.

Outputs & Testing Scenarios

- Outputs: a small exhibition (up to two finished paintings plus projected or mixed‑reality displays), a web‑accessible interactive gallery of renders, process documentation, and an evaluation report.

- Testing: studio walkthroughs with artists/students, public gallery sessions with recorded interactions and surveys, and remote testing via web 3D viewers to collect wider feedback.

Resources & Extensions

- Tools: 3ds Max, MeshLab, OpenGL/Unity for rendering, high‑res scanners/cameras, compute/GPU time, SLA/FDM printers for scaled models.

- Extensions: incorporate skulls derived from magnetic resonance data (ethical clearance required), spatial audio/lighting for immersive display, or a workshop series to train early‑career artists in these techniques.

Multi‑Perspectives will demonstrate how algorithmic shape and painterly texture can co‑author work, creating a productive feedback loop that expands creative practice, scientific visualisation, and audience understanding.

Related work and References

- Salas, M. and Maddock, S.C. (2010), “Craniofacial reconstruction based on skull-face models extracted from MRI datasets”, The Eighth Theory and Practice of Computer Graphics 2010 Conference (TP.CG.2010), pp. 143-150, Sheffield, 6-8 September 2010

- Salas, M. and Maddock, S. (2009), “Extracting skull-face models from MRI data for use in craniofacial reconstructions”, Workshop on Face Behaviour and Interaction (FBI 2009), Manchester Metropolitan University, Manchester, UK, 25 August 2009

- Salas, M. and Maddock, S.C. (2008), “Segmenting the External Surface of a Human Skull in MR Data”, The Sixth Theory and Practice of Computer Graphics 2008 Conference (TPCG’08), University of Manchester, UK, 9-11 June 2008

- Salas, M. and Maddock, S., “Segmenting the external surface of a human skull in MRI data by adding shape information to gradient vector flow snakes”, Department of Computer Science Research Memorandum CS-07-13, University of Sheffield
